Web 3.0, commonly called “Web3,” is the buzzword behind blockchain, cryptocurrency, non-fungible tokens (NFTs), and the so-called “next internet.” It promises a lot:

- more control for users,

- fewer middlemen, and

- ownership of digital assets.

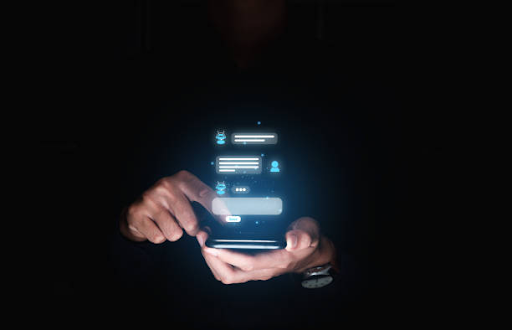

But for most people, trying to use a Web 3.0 application feels more like trying to crack a secret code than experiencing a revolution.

The vision is bold—but the experience? Often clunky, confusing, and downright frustrating. So, why does Web 3.0 still feel difficult, even for people who want to embrace it?

Join us as we explore the roadblocks users are facing.

Wallets: The First Frustration

In Web 3.0, everything starts with a wallet: your digital ID and your vault. But setting one up is not as simple as signing in with Google. Instead, users are asked to download a browser extension or an app like MetaMask, write down a seed phrase (typically 12 or 24 random words generated by the wallet), and store it somewhere safe. There is no password reset option here. If you lose your seed phrase, you can lose access to everything in the wallet for good.

A UX researcher shared this in a 2023 Reddit thread:

“We tested a decentralised application (dApp) with first-time users. Most gave up at the wallet step. One said, ‘I feel like I am opening a bank account in another language.’”

Wallets are also fragmented. You may need different wallets for different blockchains (Ethereum, Solana, etc.), and some do not work on mobile. It is no wonder people feel overwhelmed before they even begin.

Gas Fees: Unexpected Charges That Drive Users Away

Once you get a wallet and want to do something—buy an NFT, send cryptocurrency, or vote in a decentralized autonomous organization (DAO)—you will often hit another wall: gas fees. These are transaction fees users pay to use the network. On Ethereum, they can jump from a few cents to $100+ depending on network activity.

In Web 2.0, users expect clear, predictable prices. In Web 3.0, the cost is not only confusing, it is constantly changing—and that breaks user trust.
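To see why the same action can cost cents one hour and dollars the next, it helps to look at the arithmetic. The sketch below is a simplified illustration, not a wallet implementation: it treats the fee as gas units multiplied by a single gas price, and ignores the base-fee and tip split that Ethereum uses today. The ETH price of $2,000, the gas prices, and the 200,000 gas figure for a contract call are all assumed values chosen for the example. The 21,000 gas figure is the standard cost of a plain ETH transfer.

```python
GWEI_PER_ETH = 1_000_000_000  # 1 ETH = 1e9 gwei


def fee_in_usd(gas_units: int, gas_price_gwei: float, eth_price_usd: float) -> float:
    """Convert a gas amount and gas price into an approximate dollar fee."""
    fee_eth = gas_units * gas_price_gwei / GWEI_PER_ETH
    return fee_eth * eth_price_usd


ETH_PRICE_USD = 2_000.0  # assumed price, for illustration only

scenarios = [
    # (label, gas units, gas price in gwei) -- gas prices are assumed values
    ("simple transfer, quiet network", 21_000, 10),
    ("simple transfer, busy network", 21_000, 200),
    ("contract call, busy network", 200_000, 200),  # 200,000 gas is hypothetical
]

for label, gas_units, gas_price_gwei in scenarios:
    fee = fee_in_usd(gas_units, gas_price_gwei, ETH_PRICE_USD)
    print(f"{label}: ${fee:.2f}")
```

Under these assumptions the three scenarios come out to roughly $0.42, $8.40, and $80.00. The user did nothing differently in the first two cases; only the network's gas price changed, which is exactly the kind of unpredictability that erodes trust.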

Security Fears and No Customer Support

Web 3.0 gives users control, but with that comes risk. There is no “Forgot Password” button, no customer service line. Lose your wallet credentials? You are locked out forever. Fall for a scam link? Your assets are gone.

One user shared their experience on Wired:

“I clicked a link to mint a free NFT. It emptied my wallet. I did not even know what happened until hours later.”

Many Web 3.0 platforms still lack user-friendly protections like fraud warnings or clear confirmation prompts. To newcomers, it feels like walking a tightrope without a net.
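What might a plain-language fraud warning look like? Here is a minimal sketch, assuming a wallet can see which site is asking for a signature and which contract function it wants to call. The `SignatureRequest` type, the `KNOWN_SITES` allowlist, and the site names are hypothetical and exist only for this example. `setApprovalForAll` is a real function in the ERC-721 NFT standard that lets another address move every token in a collection; flagging it as risky is this sketch's assumption, not an established wallet rule.

```python
from dataclasses import dataclass

# Hypothetical allowlist of sites the user has interacted with before.
KNOWN_SITES = {"example-marketplace.test"}

# Contract functions that grant sweeping permissions (assumed list for this sketch).
BROAD_PERMISSION_METHODS = {"setApprovalForAll"}


@dataclass
class SignatureRequest:
    site: str    # domain asking the wallet to sign
    method: str  # contract function the site wants to call


def plain_language_warnings(request: SignatureRequest) -> list[str]:
    """Return human-readable warnings to show before the user confirms."""
    warnings = []
    if request.site not in KNOWN_SITES:
        warnings.append(
            f"You have not used {request.site} before. Double-check the address."
        )
    if request.method in BROAD_PERMISSION_METHODS:
        warnings.append(
            "This request lets the site move ALL of your tokens in this "
            "collection, not just one."
        )
    return warnings


# Example: a "free NFT mint" link that actually asks for sweeping permissions.
request = SignatureRequest(site="free-nft-mint.test", method="setApprovalForAll")
for message in plain_language_warnings(request):
    print("Warning:", message)
```

A real wallet would need far more than two checks, but even a prompt this simple tells the user what they are about to agree to in words they can understand.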

Too Much Jargon, Not Enough Guidance

Try explaining “staking,” “bridging,” or “yield farming” to someone new, and you will see their eyes glaze over. Many Web 3.0 platforms assume a level of knowledge most users simply do not have.

A usability study published by Arounda Agency revealed that:

“Users often feel like they are using a developer tool, not a consumer product.”

There are rarely tooltips, walkthroughs, or simple instructions.

Lack of User-Focused Design

Web 3.0 tools are often made by developers for developers. The design feels like an afterthought. Things like buttons, messages, and layouts can be unclear. In traditional tech, these issues would be caught and fixed through user testing. But in Web 3.0, the race to launch sometimes overrides usability.

What Needs to Change?

- Simplify Onboarding: New users should be guided like beginners, not expected to know cryptocurrency lingo or security practices from day one.

- Clear Fees: Show gas fees upfront and in simple terms. Offer suggestions, such as transacting at times of day when fees tend to be lower.

- Security Help: Include fraud alerts, help centers, and step-by-step recovery tools.

- Better Education: Glossaries, walkthroughs, and “learn as you go” design can make a huge difference.

- User Testing: Build products that are actually tested with users, not just cryptocurrency enthusiasts.

Final Thoughts

Web 3.0 has powerful potential. But right now, it often feels like a tech demo built for early adopters and engineers. For it to truly become what it is projected to be, the user experience must become just as revolutionary as the technology behind it.

Until then, many users will continue to peek into the Web 3.0 world, only to walk away saying, “I do not get it.”